Artificial intelligence is reshaping how developers and security teams uncover flaws and mend code, adding speed and fresh angles to old problems. Tools that scan code and watch running systems can surface odd patterns that escape routine review, cutting the time needed to identify threats.

Machine learning models trained on historical bugs and fixes predict hot spots where new faults are most likely to occur. The mix of automated scale and human judgment is creating workflows that feel both clever and practical.

Automated Vulnerability Detection

Modern scanners combine pattern matching with probabilistic models that score risk and highlight suspect code areas for review. Static analysis engines can parse millions of lines and filter out noise while learning from prior true positives to sharpen future alerts.
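As a rough illustration of how pattern matching and a learned score can be combined, the sketch below pairs a few regular-expression rules with a per-rule precision estimate. The rule names, weights, and the `precision` mapping are all hypothetical; a real scanner would use far richer analysis than line-level regexes.

```python
from __future__ import annotations

import re
from dataclasses import dataclass

# Hypothetical rules: (name, pattern, base weight). Illustrative only.
RULES = [
    ("eval-call", re.compile(r"\beval\s*\("), 0.9),
    ("shell-true", re.compile(r"shell\s*=\s*True"), 0.7),
    ("hardcoded-secret", re.compile(r"(?i)(password|secret)\s*=\s*['\"]"), 0.6),
]


@dataclass
class Finding:
    line_no: int
    rule: str
    score: float


def scan(source: str, precision: dict[str, float] | None = None) -> list[Finding]:
    """Match each line against the rules and score every hit.

    `precision` is an assumed per-rule estimate (0..1) of how often past
    alerts from that rule were true positives; unknown rules default to 1.0.
    """
    precision = precision or {}
    findings = []
    for line_no, line in enumerate(source.splitlines(), start=1):
        for name, pattern, weight in RULES:
            if pattern.search(line):
                score = weight * precision.get(name, 1.0)
                findings.append(Finding(line_no, name, round(score, 3)))
    # Highest-risk findings first, so reviewers see them at the top.
    return sorted(findings, key=lambda f: f.score, reverse=True)
```

Scaling each rule by its historical precision is one simple way to let prior true positives push noisy rules down the list without removing them entirely.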

Dynamic monitors exercise running software and log abnormal interactions or memory oddities that hint at exploitable conditions. Exploring a blitzy.com review can offer additional perspective on how these systems support real development workflows.

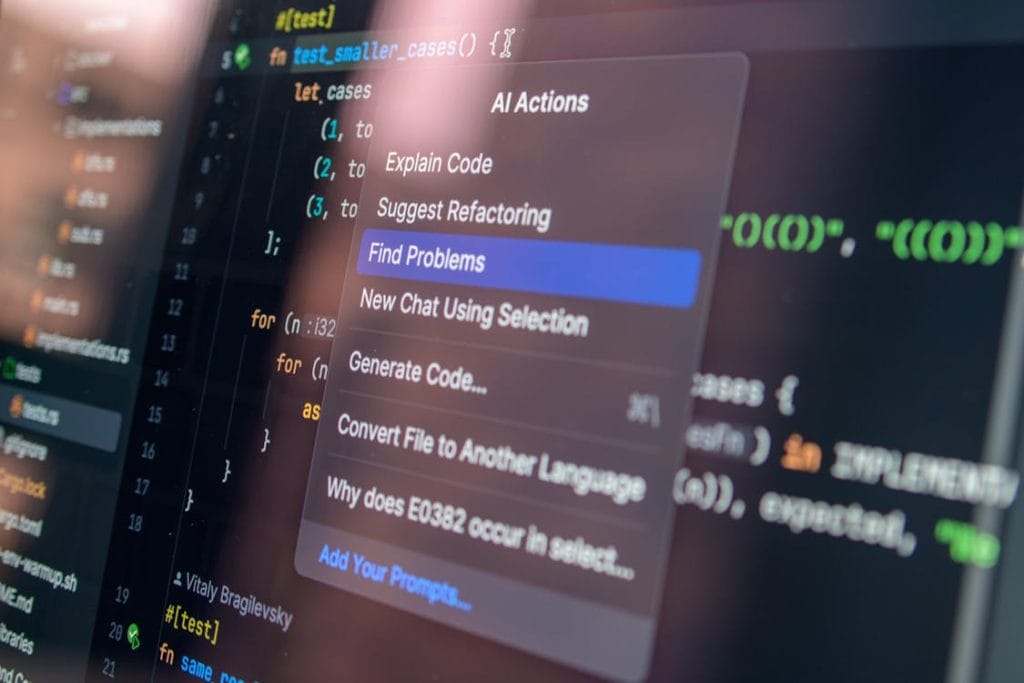

Intelligent Code Review Assistants

Code assistants ingest pull requests and flag suspicious constructs, offering short explanations for why a change might weaken safety. They propose small edits that follow common repair patterns observed in large corpora of fixes, often suggesting replacements that cut down on risky operations.

Reviewers get concise pointers rather than raw data dumps, which helps them focus on judgment calls that require context. Over time, the tools learn style and policy preferences so feedback feels less generic and more aligned with team norms.

Automated Patch Generation And Repair

Some models can synthesize candidate patches by stitching together snippets or by refactoring fragile sections into safer idioms. These candidates pass through a battery of tests that mimic realistic loads and edge cases before being suggested for human review.

While not every automatic patch is accepted, many shave hours off what would be a manual rewrite, especially for repetitive patterns. These systems tend to work best when they augment an expert who understands trade-offs and deployment risk.
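The test-gating step can be sketched in a few lines. This is a minimal sketch, assuming candidates are plain Python callables and each test is a function that returns `True` on success; the `parse_port` scenario and every name below are invented for illustration.

```python
from typing import Callable, List, Tuple

Candidate = Callable[[str], int]
Test = Callable[[Candidate], bool]


def surviving_candidates(
    candidates: List[Tuple[str, Candidate]], tests: List[Test]
) -> List[str]:
    """Return the names of candidates that pass every test."""
    survivors = []
    for name, candidate in candidates:
        try:
            if all(test(candidate) for test in tests):
                survivors.append(name)
        except Exception:
            # A candidate that makes a test blow up is discarded.
            continue
    return survivors


# Two hypothetical candidate patches for a port parser.
def candidate_a(text: str) -> int:
    return int(text)  # no range check


def candidate_b(text: str) -> int:
    port = int(text)
    if not 0 < port < 65536:
        raise ValueError("port out of range")
    return port


def accepts_valid(fn: Candidate) -> bool:
    return fn("8080") == 8080


def rejects(value: str) -> Test:
    """Build a test that passes only if `fn` raises ValueError on `value`."""
    def test(fn: Candidate) -> bool:
        try:
            fn(value)
        except ValueError:
            return True
        return False
    return test


TESTS = [accepts_valid, rejects("70000"), rejects("abc"), rejects("0")]
CANDIDATES = [("candidate_a", candidate_a), ("candidate_b", candidate_b)]
```

Calling `surviving_candidates(CANDIDATES, TESTS)` leaves only `candidate_b`, which would then go to a human reviewer rather than being merged automatically.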

Enhanced Fuzz Testing And Simulation

Fuzz engines guided by machine learning focus efforts on input shapes that historically expose deep faults, rather than random pounding of the surface. By modeling program state transitions, they generate sequences that probe edge states and race conditions with greater efficiency.

The approach reduces wasted computation and increases the chance of finding low-frequency failures that hide in plain sight. Directed fuzzing tends to yield more actionable bugs in less wall-clock time than purely random input generation.
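To make the idea of guided fuzzing concrete, here is a toy loop that keeps only inputs reaching new branches. Note that it uses plain coverage feedback as a stand-in for a learned model, and that `target`, its branch ids, and the mutation strategy are all assumptions made for the example.

```python
from __future__ import annotations

import random


def target(data: bytes) -> set[str]:
    """Toy program under test: returns the ids of the branches it executed."""
    hit = {"entry"}
    if len(data) > 0 and data[0] == ord("F"):
        hit.add("b1")
        if len(data) > 1 and data[1] == ord("U"):
            hit.add("b2")
            if len(data) > 2 and data[2] == ord("Z"):
                raise RuntimeError("simulated crash")
    return hit


def mutate(data: bytes, rng: random.Random) -> bytes:
    """Overwrite, insert, or delete one byte at a random position."""
    buf = bytearray(data or b"\x00")
    pos = rng.randrange(len(buf))
    choice = rng.random()
    if choice < 0.6:
        buf[pos] = rng.randrange(256)
    elif choice < 0.9:
        buf.insert(pos, rng.randrange(256))
    elif len(buf) > 1:
        del buf[pos]
    return bytes(buf)


def fuzz(seed_input: bytes, iterations: int, seed: int = 0):
    """Keep mutated inputs only when they reach a branch not seen before."""
    rng = random.Random(seed)
    corpus = [seed_input]
    seen: set[str] = set()
    crashes = []
    for _ in range(iterations):
        candidate = mutate(rng.choice(corpus), rng)
        try:
            coverage = target(candidate)
        except RuntimeError:
            crashes.append(candidate)
            continue
        if not coverage <= seen:
            # New behavior: remember it and mutate from here in later rounds.
            seen |= coverage
            corpus.append(candidate)
    return corpus, seen, crashes
```

Because inputs that open a new branch are kept as starting points, later mutations build on partial progress instead of rediscovering it, which is the efficiency gain the section describes. A model-guided fuzzer would replace the uniform `rng.choice(corpus)` and the blind byte mutations with learned preferences.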

Predictive Models For Bug Prioritization

Predictors rank findings by probable impact and cost to fix, helping teams choose where to invest scarce attention and cycles. These models combine code churn, module criticality, past incident severity and developer signals into a score that correlates with future outages.

When triage queues overflow, a sensible ranking that factors in context beats a long list of raw alerts. The scoring is not infallible, but it nudges scarce human time toward the places that matter most.
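One simple way to picture such a score is a weighted sum of normalized signals divided by the estimated fix cost. The weights, field names, and sample alerts below are made up for illustration; a production model would learn them from incident history rather than hard-code them.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical weights for signals normalized to the range 0..1.
WEIGHTS = {"churn": 0.3, "criticality": 0.4, "severity": 0.2, "dev_signal": 0.1}


@dataclass
class Alert:
    name: str
    churn: float           # how often the code changes
    criticality: float     # importance of the module
    severity: float        # severity of past incidents in this area
    dev_signal: float      # e.g. reviewer concern or flagged comments
    fix_cost_hours: float  # estimated effort to fix


def priority(alert: Alert) -> float:
    """Estimated impact per hour of fix effort."""
    impact = sum(weight * getattr(alert, key) for key, weight in WEIGHTS.items())
    return impact / max(alert.fix_cost_hours, 1.0)


def rank(alerts: List[Alert]) -> List[Alert]:
    """Order alerts from highest to lowest priority."""
    return sorted(alerts, key=priority, reverse=True)


ALERTS = [
    Alert("auth-bypass", 0.8, 1.0, 0.9, 0.5, fix_cost_hours=4),
    Alert("log-typo", 0.2, 0.1, 0.1, 0.0, fix_cost_hours=1),
    Alert("parser-overflow", 0.6, 0.7, 0.8, 0.3, fix_cost_hours=2),
]
```

With these sample numbers, `rank(ALERTS)` puts `parser-overflow` first: its impact is lower than that of `auth-bypass`, but it is cheaper to fix. Whether to divide by cost at all is a policy choice; some teams may prefer to rank by impact alone for critical modules.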

Using Natural Language For Security Insights

Language models digest commit messages, issue threads and bug reports to extract patterns and link related incidents across projects. They can surface prior fixes that match new bug descriptions and suggest keywords for faster search or automated triage.
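The matching of new bug descriptions to prior fixes can be approximated, very crudely, with word overlap. The sketch below uses Jaccard similarity over tokens rather than a language model, so it only catches shared vocabulary; the fix ids, descriptions, and stop-word list are invented for the example.

```python
import re
from typing import Dict, List, Set

STOP_WORDS = {"a", "the", "in", "on", "of", "with", "when", "is"}


def tokens(text: str) -> Set[str]:
    """Lowercase word tokens with common stop words removed."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOP_WORDS


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Size of the intersection divided by size of the union."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def similar_fixes(report: str, history: Dict[str, str], top_k: int = 2) -> List[str]:
    """Return ids of past fixes whose descriptions overlap most with the report."""
    query = tokens(report)
    scored = [(jaccard(query, tokens(desc)), fix_id) for fix_id, desc in history.items()]
    scored = [item for item in scored if item[0] > 0]
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [fix_id for _, fix_id in scored[:top_k]]


# Hypothetical archive of past fixes.
HISTORY = {
    "FIX-1": "buffer overflow in image parser when width is negative",
    "FIX-2": "null pointer crash on empty config file",
    "FIX-3": "sql injection in login form",
}
```

For the report "crash in image parser with negative width", this returns `FIX-1` ahead of `FIX-2` and drops `FIX-3`, which shares no meaningful words. An embedding-based search would also catch paraphrases that share no tokens at all.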

Conversational interfaces enable engineers to ask targeted questions and receive concise summaries and next steps in plain terms. That human-friendly layer reduces friction and makes archival knowledge easier to reuse.

Human In The Loop Workflows

Smart systems are most useful when they involve a human who validates high-risk choices and annotates outcomes for future learning. Feedback loops where engineers accept, tweak or reject suggestions create training signals that improve model relevance and reduce false positives.

This partnership keeps accountability clear and encourages a mindset where tools assist while people steer. In many teams the best results come from iterative cycles of suggestion and disciplined review.
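A feedback loop of this kind can start as simple bookkeeping. The sketch below records accept, tweak, and reject decisions per rule and turns them into a smoothed usefulness estimate. Counting a tweak as half an accept is an arbitrary assumption for the example, and the class and rule names are hypothetical.

```python
from collections import defaultdict

OUTCOMES = ("accept", "tweak", "reject")


class FeedbackLog:
    """Tracks reviewer decisions for each rule or suggestion type."""

    def __init__(self) -> None:
        self.counts = defaultdict(lambda: {outcome: 0 for outcome in OUTCOMES})

    def record(self, rule: str, outcome: str) -> None:
        if outcome not in OUTCOMES:
            raise ValueError(f"unknown outcome: {outcome!r}")
        self.counts[rule][outcome] += 1

    def usefulness(self, rule: str) -> float:
        """Smoothed share of suggestions that reviewers found useful.

        Add-one smoothing keeps rules with little feedback near 0.5
        instead of jumping to 0 or 1 after a single decision.
        """
        counts = self.counts.get(rule, {outcome: 0 for outcome in OUTCOMES})
        useful = counts["accept"] + 0.5 * counts["tweak"]
        total = sum(counts.values())
        return (useful + 1) / (total + 2)
```

For example, after three accepts and one reject a rule scores about 0.67, while a rule with no feedback stays at 0.5. Such a score could feed back into alert ranking so that frequently rejected suggestions sink over time.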

Risks And Attack Surface Of Learning Systems

AI tools themselves can be targeted by adversaries who craft inputs meant to confuse models, either to hide real bugs or to trigger a flood of false alarms that drowns out genuine issues. Models trained on public code may inherit insecure patterns if the training data is not curated, propagating bad habits at scale.

Dependency on automated fixes without adequate review can introduce subtle regressions that are costly to diagnose later on. Defending these tools requires monitoring, red teaming and careful governance so trust does not evaporate.

Scaling Secure Development Practices

Automation lets small teams punch above their weight by embedding security checks early and often into development pipelines and build steps. Continuous testing and feedback mean many issues are detected before they reach production, saving time and reputation later on.
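A pipeline check of this sort often comes down to a small gate that turns scanner output into a pass or fail signal. The sketch below assumes findings arrive as dictionaries with a `severity` field; that format, the severity labels, and the default threshold are assumptions, not the output of any particular tool.

```python
from typing import Dict, List, Tuple

SEVERITY_ORDER = {"low": 0, "medium": 1, "high": 2, "critical": 3}


def gate(findings: List[Dict[str, str]], fail_at: str = "high") -> Tuple[int, List[Dict[str, str]]]:
    """Return (exit_code, blocking_findings) for a build step.

    The exit code is 1 when any finding is at or above `fail_at`, else 0.
    """
    threshold = SEVERITY_ORDER[fail_at]
    blocking = [f for f in findings if SEVERITY_ORDER[f["severity"]] >= threshold]
    return (1 if blocking else 0), blocking


# Hypothetical scanner output.
SAMPLE = [
    {"id": "S-101", "severity": "low"},
    {"id": "S-102", "severity": "high"},
    {"id": "S-103", "severity": "medium"},
]
```

A build script could call `gate(SAMPLE)` and pass the returned code to `sys.exit`, so that a high-severity finding stops the build while lower-severity ones are only reported.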

Training programs that blend tool use with hands-on exercises help engineers translate automated signals into sharp judgment. When process, tooling and people align, safe releases become less of a shot in the dark and more of a predictable routine.